Most of the provisions of the EU’s Artificial Intelligence Act (AI Act) were originally due to take effect on 2 August 2026. However, on 7 May 2026, the presidency of the Council of the European Union and European Parliament negotiators reached a provisional agreement to extend certain implementation deadlines as part of wider efforts to simplify compliance obligations under the regime. The watermarking compliance deadline for generative AI systems that are not already available will remain 2 August 2026, but other deadlines have been revised as follows:

- A transitional period to 2 December 2026 for some of the transparency obligations and for a new prohibited AI practice (relating to systems which generate non-consensual sexual or intimate content, or abuse material).

- Compliance in relation to high-risk AI systems – 2 December 2027.

- Compliance for high-risk AI systems used as a safety component within a product already regulated by other EU product safety regulation – 2 August 2028.

B P Collins’ corporate and commercial team highlights below what this means for UK businesses given the extra-territorial reach of the AI Act (with the caveat that this is a fast-moving area and further changes are likely).

Key highlights of the AI Act

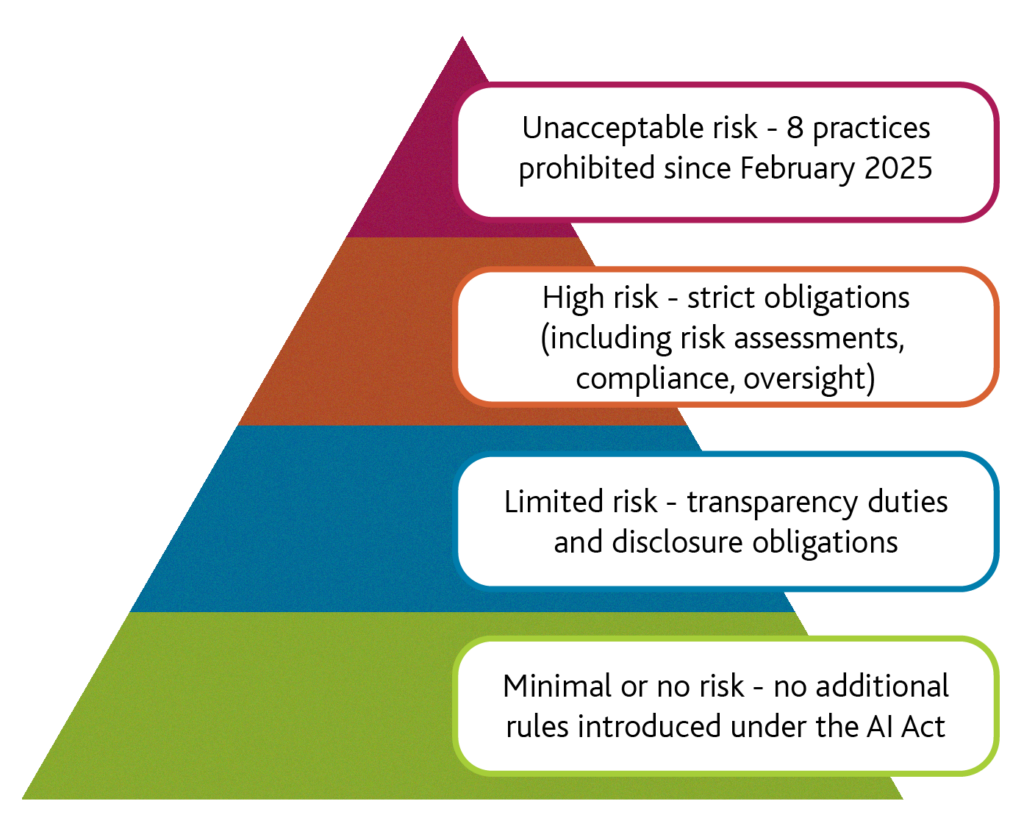

The AI Act follows a risk-based approach and it can be helpful to think of this as a pyramid of four levels of risk:

Expanding on the criteria in greater detail:

High-risk AI systems are permitted but will likely be subject to extensive compliance obligations. High-risk AI systems are those used in areas such as recruitment, promotion and performance management; creditworthiness and access to financial services; and biometric identification. Some of the compliance requirements include:

- Establishing a lifecycle risk management system to identify and mitigate foreseeable intended-use and misuse risks, to the extent technically feasible;

- Using training, validation and test data that meets quality criteria (relevant, representative, as unbiased as possible, and as error-free and complete as possible) for the intended purpose;

- Maintaining technical documentation demonstrating compliance to support conformity assessment and downstream compliance (the Commission has said it will provide simplified forms for SMEs);

- Enabling automatic record-keeping (event logs) across the system lifetime;

- Providing clear information and instructions for use so deployers can interpret outputs and use the system appropriately, including provider contact details, intended purpose and accuracy;

- Ensuring effective human oversight, including the ability to interrupt or stop the system safely, to reduce intended-use and foreseeable-misuse risks; and

- Maintaining appropriate accuracy, robustness and cybersecurity throughout the system lifetime, including resilience to attacks such as data poisoning and model evasion.

Transparency duties for limited‑risk AI – the AI Act introduces transparency obligations for certain AI systems that interact directly with individuals. These include requirements to disclose that users are engaging with AI rather than a human, and to mark or disclose AI‑generated or manipulated content where relevant to prevent deception.

General-Purpose AI (GPAI) – the AI Act introduces special rules for GPAI models (including large language and generative models). For example, all GPAI model providers must provide technical documentation, comply with EU copyright law and publish a summary of training data sources.

Minimal or no risk – most AI systems are likely to fall into this category. The EU gives the example of an AI spam filter as a system with minimal or no risk. The AI Act does not add new rules for AI with minimal or no risk.

Extra‑territorial scope and what UK businesses need to consider

The AI Act has broad reach beyond the EU. It applies to:

- Providers placing AI systems or general‑purpose AI (GPAI) models on the EU market, irrespective of whether the provider is established in the EU;

- Users (individuals or entities) of AI systems who are located within the EU; and

- Providers and users established outside the EU where the output of their AI system is used in the EU, unless there is an exemption that applies.
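The three limbs above can be read as a simple decision rule. The sketch below is illustrative only: the `Business` class, its field names and the `may_fall_in_scope` function are hypothetical, and the single `exemption_applies` flag is an assumption that stands in for a proper legal analysis of the available exemptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Business:
    """Hypothetical summary of the facts relevant to the three limbs above."""
    provider_placing_on_eu_market: bool  # limb 1: provider placing a system or GPAI model on the EU market
    user_located_in_eu: bool             # limb 2: user of an AI system located within the EU
    output_used_in_eu: bool              # limb 3: output of the AI system is used in the EU
    exemption_applies: bool = False      # assumption: one flag for any applicable exemption


def may_fall_in_scope(business: Business) -> bool:
    """Return True if any of the three limbs listed above is met."""
    if business.provider_placing_on_eu_market:
        return True  # applies irrespective of where the provider is established
    if business.user_located_in_eu:
        return True
    # Limb 3 is the only limb the list above qualifies with an exemption.
    return business.output_used_in_eu and not business.exemption_applies


# A UK-hosted business whose outputs are used by EU customers.
uk_saas = Business(
    provider_placing_on_eu_market=False,
    user_located_in_eu=False,
    output_used_in_eu=True,
)
print(may_fall_in_scope(uk_saas))
```

A rule like this is only a first filter: a positive result indicates that the business should look at the AI Act more closely, not that a particular obligation applies.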

UK businesses that make AI functionality available to EU customers, or whose systems produce outputs used in the EU, will therefore fall within scope (unless an exemption applies), even if development and hosting occur in the UK or elsewhere. Non-compliance can lead to significant fines, which could be up to the greater of €35 million or 7% of global annual turnover for the most serious violations.
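To illustrate how the "greater of" ceiling works in practice, the following minimal sketch computes the upper limit for the most serious violations from the two figures quoted above. The function and constant names are hypothetical, and the result is a ceiling on the fine, not a prediction of the fine a regulator would actually impose.

```python
from decimal import Decimal

# Figures quoted above for the most serious violations.
FIXED_CEILING_EUR = Decimal("35000000")  # EUR 35 million
TURNOVER_RATE = Decimal("0.07")          # 7% of global annual turnover


def max_fine_ceiling(global_annual_turnover_eur: Decimal) -> Decimal:
    """Return the greater of the fixed ceiling and 7% of global annual turnover."""
    return max(FIXED_CEILING_EUR, global_annual_turnover_eur * TURNOVER_RATE)


# Turnover of EUR 100 million: 7% is EUR 7 million, so the fixed ceiling applies.
print(max_fine_ceiling(Decimal("100000000")))

# Turnover of EUR 1 billion: 7% is EUR 70 million, which exceeds the fixed ceiling.
print(max_fine_ceiling(Decimal("1000000000")))
```

On these figures, the turnover-based limb becomes the higher of the two once global annual turnover exceeds €500 million (since 7% of €500 million is €35 million).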

If a UK business is caught by the AI Act, it should ensure it is aware of legislative developments and changing deadlines. It should also consider:

- Checking that any use of prohibited AI practices has ceased.

- Checking whether they have any high-risk AI systems and if so, what technical requirements need to be put in place.

- Reviewing and updating (or, if not already in place, preparing) user interfaces, notices and content policies to meet transparency obligations for AI interactions and AI‑generated content.

- Establishing governance, accountability and incident‑readiness, including the designation of responsible persons and EU representatives where required.

- For prospective high‑risk systems, initiating conformity planning, data governance remediation, human oversight design, technical documentation, logging and post‑market monitoring frameworks.

- For GPAI providers and integrators, planning model‑level documentation, evaluation and risk mitigation support, and tracking upcoming codes of practice and harmonised standards.

This list is not exhaustive, but it highlights key action points for UK businesses likely to fall within the scope of the AI Act. The EU has published a compliance checker tool that can be used to help understand which rules may apply to your AI system.

As stated at the start of this article, changes to the AI Act are likely, and more will be known once the proposed Digital Omnibus on AI regulation is adopted, which is expected around August. For further advice and information on how it could affect you, please email enquiries@bpcollins.co.uk or call 01753 889995.